The Back Story

It’s 2019, a Friday afternoon in October, and I’m driving home from school. It’s been a tough day and my brain is absolutely cooked from making 8,000 decisions during my Math 8 classes and giving a cumulative exam in Enhanced Math 1. Seems like giving a test should make for an easy day, as you don’t have to do much, but that’s not the case. Stress levels in students are high. With stress and high expectations comes the willingness to compromise morals and the desire to cheat. My attention must be laser-focused to make sure students are working with integrity. It’s…not fun. I know there are other ways to assess students, but the most authentic assessment of each student’s ability is to assess them independently (as far as I have found, anyway).

Then there’s the grading. Before switching to Standards Based Grading, we used a traditional points-based system, assigning point values to each question, then deducting points from a question if work or formatting was incorrect. With about 100 students taking a test that is about 20 questions long, that’s examining 2,000 test items, most of which have multiple steps of work. I might give 1 or 2 well-crafted multiple-choice questions, but 90% of the exam is handwritten work with many steps to inspect. When I stack up all the exams, shove them in my messenger bag, and toss the bag in the car, the ride home feels so daunting, knowing I must spend the next 8-10 hours grinding.

Having already gone over the way I am grading student work now using the 4-point rubric, let me just say that it is so much better than itemizing point deductions for each question like I used to. I would drive myself crazy trying to determine if something was minus 1, minus 2, or more. I even got to the point where I was deducting one tenth of a point on certain questions, which in hindsight was absolutely insane. Like, what was I doing???

Target Specific Assessments

One of the best changes we made this past school year was how we assess our students. Before 2020 we would give one large assessment each month, which was always cumulative up to that point. That meant that at the end of February the students would get the “February Test”, which could have any topic on it they learned from August until about mid-February. We emphasized more recent material, and the old stuff was relegated to a few questions on the essential Learning Targets. The test was always worth 100 points, and we used a year-long gradebook. By the end of the school year the gradebook had about 1,200 points in it (including the monthly tests, quizzes, and homework).

The rationale was that we wanted students to maintain their skills throughout the year, instead of simply learning something for a short time and then never recalling it again because it would never be assessed again. While I agreed with this premise, the downside was that these tests were very stressful for the students, usually took up an entire block period to administer, and took an extremely long time for me to grade. Each exam would have around 20 questions on it of varying Depths of Knowledge, so grading around 170 of them each month was mentally exhausting.

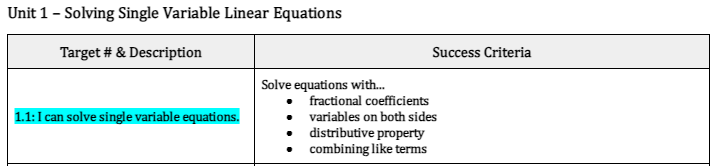

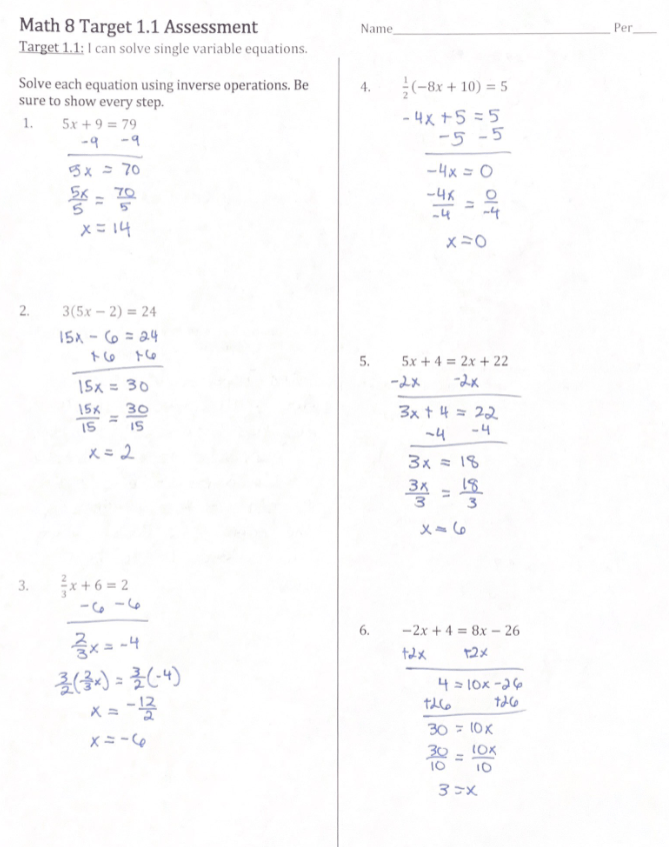

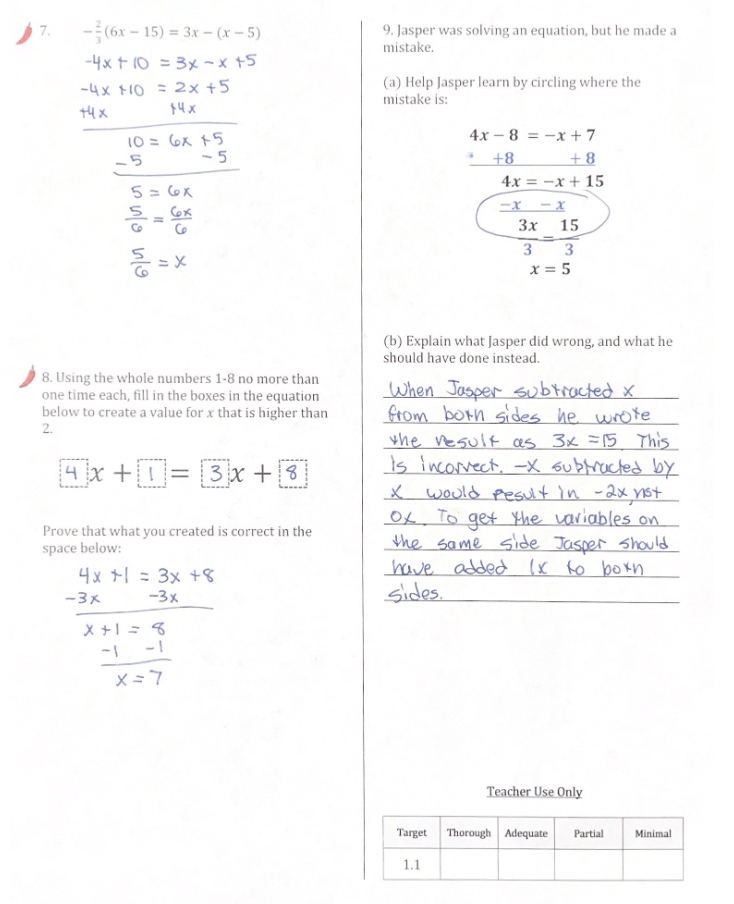

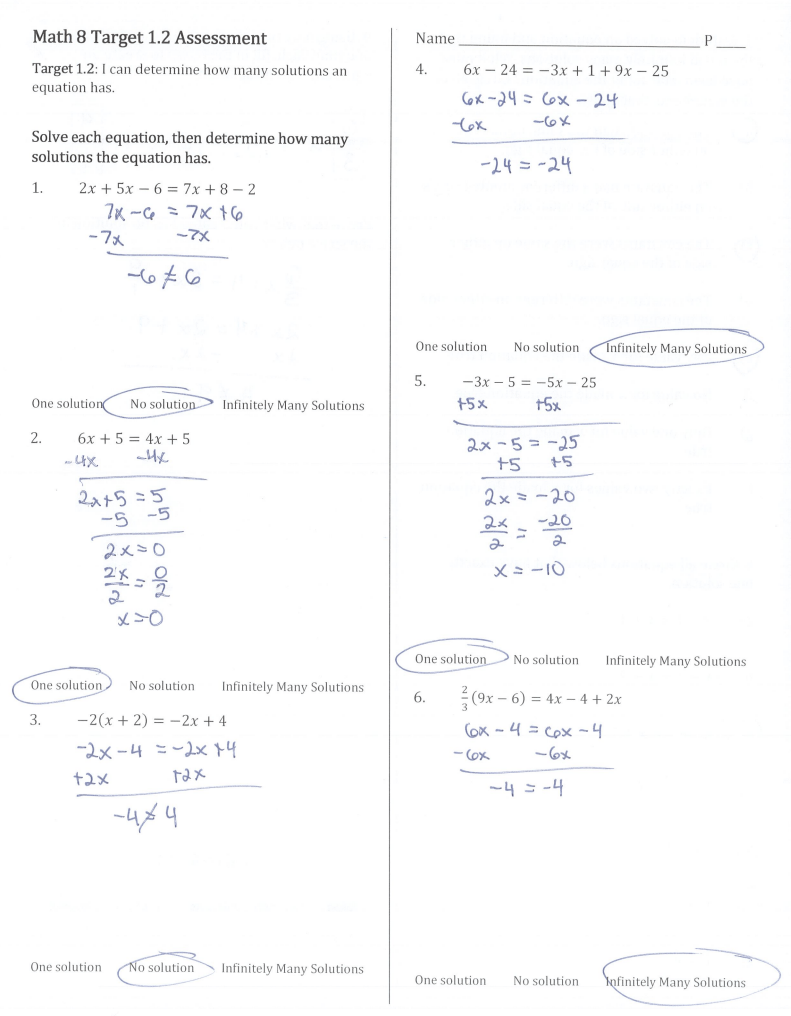

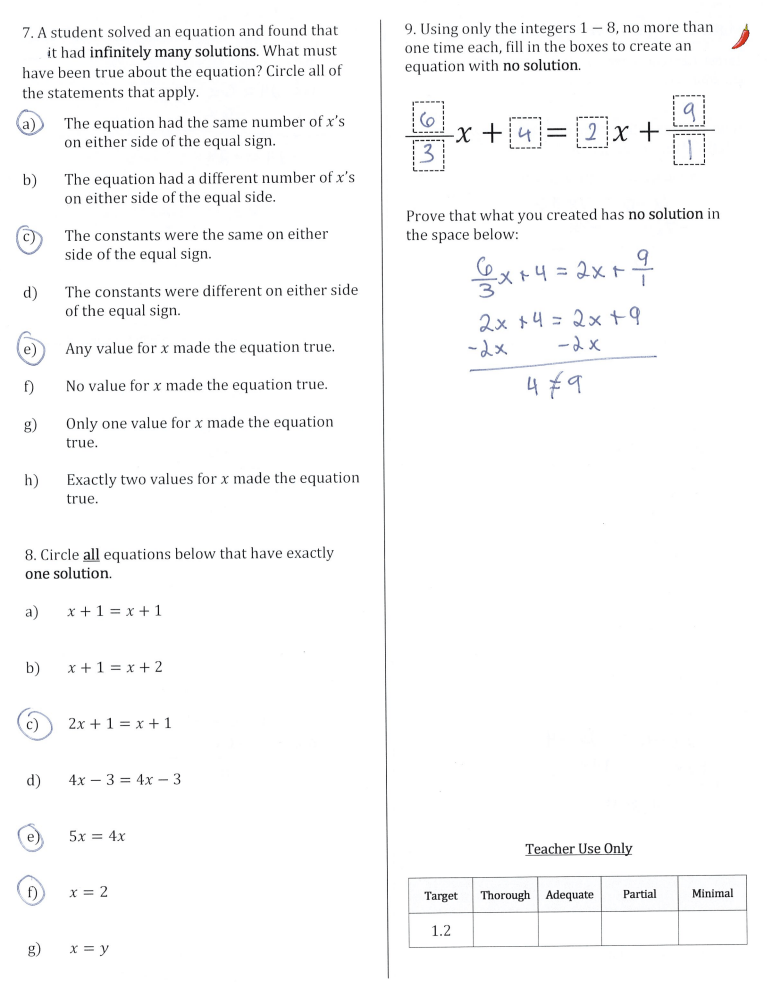

So instead we switched to more frequent Target specific assessments, focusing on only 1 to 2 Targets each. The assessments were much shorter, able to be completed in a 51-minute period by most students, and each Target could be covered by a variety of questions at different levels of rigor. We included spicy peppers to indicate to students which questions we considered more challenging, and those were the ones they should get correct to be considered having “Thorough” understanding of the Target. Here is an example of an assessment I gave last year in Math 8:

The Benefits of Target Focused Assessments

In 8th grade we gave 14 different assessments that covered 20 of the Learning Targets for the year. This meant that I graded assessments more frequently, but the assessments were much quicker to complete. Whenever I assessed Math 8 I was able to grade both class periods in under one hour, usually on the same day I gave the assessment. I could literally never do that before. Many times students would take the assessment on Friday and I could hand it back to them on Monday. During the days of grading a cumulative test it might take me a week or more to finish marking everything, so the feedback arrived later and was less valuable.

One of the best results from the more Target focused, smaller assessments was that students were not as stressed out or overwhelmed. Since the assessments were shorter and more focused, students were able to finish them in a reasonable time period, and students with IEPs and 504 plans did not need to use their time accommodations as often. Additionally, with the retake policy we adopted, students knew that they always had a second chance to take a different but similar version of the exam, so if they just weren’t feeling it the day of the test, they always had the chance to try again.

Giving these shorter assessments also gave me more flexibility on the day of the test. Since most students would finish with additional time, I was able to give them some more interesting tasks to do once they were finished. I now post Open Middle problems at my thinking stations, Non-Curricular Thinking Tasks, extension problems from previous Targets, or Desmos activities that preview the next Target we are going to learn. Assessment day is now a “show me what you know, then go find something you are interested in” kind of day, rather than a stress-fest of feverishly working until the bell rings.

This isn’t to say that every student was instantly successful the first time, or that my assessment results were amazing across the board. In Part 3 I will look at how students did overall, how they reflected on their own results, and whether the retake system worked for all students. See you next time!